This blog post was published on Hortonworks.com before the merger with Cloudera. Some links, resources, or references may no longer be accurate.

The AWS S3 protocol is the de facto interface for modern object stores. The Ozone-0.3.0-Alpha release adds the S3 protocol as a first-class notion to Ozone. For all practical purposes, a user of S3 can start using Ozone without any change to code or tools.

A Bit of History

When we started building Ozone, there was a lot of discussion about Ozone merely being a clone of S3. We chose to develop an independent Ozone layer to make it easy to support the existing family of big data applications. S3 is an eventually consistent system, which makes it hard to port existing applications to it; S3Guard is an excellent example of what users need to do to make S3 work for existing applications.

With Ozone, we chose strong consistency as a fundamental property of the system. Since Ozone is a consistent object store, it was easy to support the myriad applications that work against HDFS and the Hadoop ecosystem.

However, we heard, both in private conversations and via tweets, that the lack of S3 protocol support was a blocker for many people. The community and users let us know in no uncertain terms that S3 protocol support is a must-have for Ozone.

With this release, Ozone-0.3.0-Alpha (our second release of Ozone), we address the need to have S3 protocol support in Ozone. The S3 protocol support offered by Ozone is strongly consistent, so you don’t need to run sidekick tools like S3Guard when running big data applications like Apache Spark, Apache YARN or Apache Hive.

Using S3 Gateway

If you are an existing Amazon S3 user, it is effortless to start using Ozone via the S3 Gateway. You are only two steps away from using Ozone. First, set up an access key in Ozone; second, change the request endpoint to point to the S3 Gateway. Now you are all set.

Here is what a typical command looks like with the new endpoint when using the AWS S3 CLI.

aws s3api --endpoint-url http://s3gateway.ozone.org:9878 create-bucket --bucket documents

This command creates a bucket called documents on the Ozone cluster via the AWS S3 tools. Note that the only material difference from a regular AWS invocation is the endpoint.
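The same endpoint override applies to the rest of the AWS CLI. The sketch below wraps `aws` in a small helper so that every call targets the gateway. The helper name `ozone_aws`, the `DRY_RUN` switch, the file `report.txt`, and the credential placeholders are assumptions made for illustration; only the endpoint and the bucket name come from the example above. With `DRY_RUN` left at its default, the script only prints the commands it would run.

```shell
#!/bin/sh
# Gateway endpoint and bucket name taken from the example above.
ENDPOINT="http://s3gateway.ozone.org:9878"
BUCKET="documents"

# Placeholder credentials (assumption): replace with the access key set up in Ozone.
export AWS_ACCESS_KEY_ID="${AWS_ACCESS_KEY_ID:-my-access-key}"
export AWS_SECRET_ACCESS_KEY="${AWS_SECRET_ACCESS_KEY:-my-secret-key}"

# Hypothetical helper: send every AWS CLI call to the Ozone S3 Gateway.
# With DRY_RUN=1 (the default) the command is printed instead of executed.
ozone_aws() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "aws --endpoint-url $ENDPOINT $*"
  else
    aws --endpoint-url "$ENDPOINT" "$@"
  fi
}

ozone_aws s3api create-bucket --bucket "$BUCKET"        # create the bucket
ozone_aws s3 cp ./report.txt "s3://$BUCKET/report.txt"  # upload a local file
ozone_aws s3 ls "s3://$BUCKET/"                         # list the bucket contents
```

Running the script with `DRY_RUN=0` executes the commands against the gateway, provided the AWS CLI is installed and the gateway is reachable.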

If you are a power user of Apache Hadoop or Ozone, you may well want to access the S3 bucket via Ozone FS (a Hadoop compatible file system) or via S3A FS (another Hadoop compatible file system).

Both are possible: point Ozone FS at the Ozone path, or point S3A FS at the S3 bucket. Once you have a Hadoop compatible file system running, it is trivial to run applications like Apache Spark or YARN.

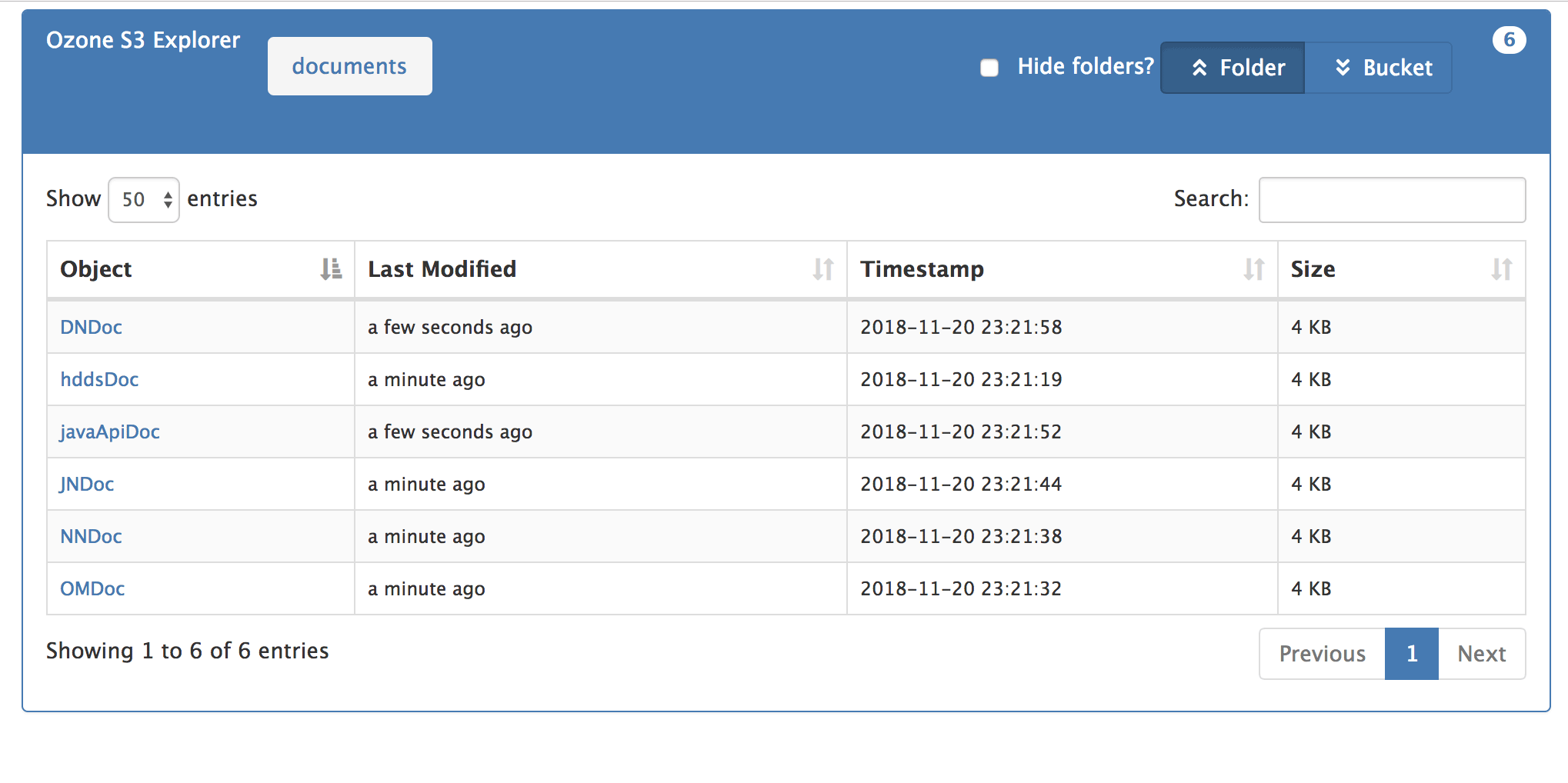
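As a rough sketch of the S3A route, the standard S3A properties can be passed to `hadoop fs` on the command line to redirect it to the gateway. The helper name `s3a_fs`, the `DRY_RUN` switch, and the file `report.txt` are hypothetical. Path-style access and disabled SSL are assumptions about a plain-HTTP gateway like the one in the earlier example, not settings taken from the Ozone release documentation. Credentials are assumed to be configured separately, for example via `fs.s3a.access.key` and `fs.s3a.secret.key`.

```shell
#!/bin/sh
# Gateway endpoint taken from the AWS CLI example earlier in this post.
ENDPOINT="http://s3gateway.ozone.org:9878"

# Hypothetical helper: run a `hadoop fs` command with S3A pointed at the gateway.
# With DRY_RUN=1 (the default) the command is printed instead of executed.
s3a_fs() {
  # Prepend the hadoop invocation and S3A overrides to the caller's arguments.
  set -- hadoop fs \
    -D fs.s3a.endpoint="$ENDPOINT" \
    -D fs.s3a.path.style.access=true \
    -D fs.s3a.connection.ssl.enabled=false \
    "$@"
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

s3a_fs -ls s3a://documents/                          # list the bucket
s3a_fs -put ./report.txt s3a://documents/report.txt  # upload a local file
```

The same three properties could instead be placed in `core-site.xml`, so that Spark or YARN jobs pick them up without per-command flags.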

For more information on how to use the S3 Gateway with Ozone, please see the release documentation for Ozone-0.3.0-Alpha. We also ship a basic, no-frills bucket browser, based on code from GitHub.

Here is a screenshot of the bucket browser in action.