This blog post was published on Hortonworks.com before the merger with Cloudera. Some links, resources, or references may no longer be accurate.

In this guest blog, Sumeet Kumar Agrawal, principal product manager for the Big Data Edition product at Informatica, explains how Informatica’s Big Data Edition integrates with Hortonworks’ security projects, and how you can secure your big data projects.

Many companies already use big data technology like Hadoop for their production environments, so they can store and analyze petabytes of data including transactional data, weblog data, and social media content to gain better insights about their customers and business. Accenture found in a recent survey that 79 percent of respondents agree that “companies that do not embrace big data will lose their competitive position and may even face extinction.”

However, without proper security, your Big Data solution might very well open the doors to breaches that have the potential to cause serious reputational damage and legal repercussions. Hortonworks has led the community in bringing comprehensive security, in open source, to Apache Hadoop. Partners like Informatica can leverage security frameworks in Hadoop to enable users to securely bring in data from external sources, transform it and load it into the different Hadoop components.

Informatica Big Data Edition Integration with Hortonworks Security Projects

Informatica Big Data Edition’s codeless, visual development environment accelerates the ability of organizations to put their Hortonworks Data Platform clusters into production. As an alternative to implementing complex hand-coded data movement and transformation, Informatica Big Data Edition enables high-performance data integration and quality pipelines that leverage the full power of each node of your Hadoop cluster, with team-based visual tools that can be used by any ETL developer.

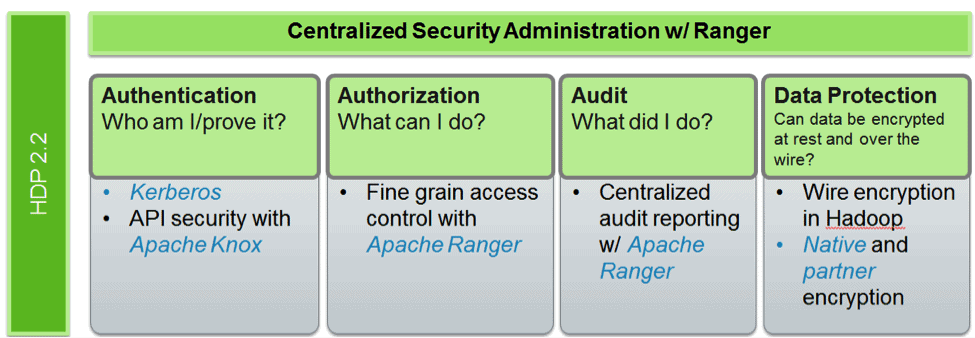

Informatica Big Data Edition integrates with the security framework offered within HDP. The following figure shows the security offerings within the latest version of HDP:

Authentication

Kerberos

Kerberos is the most widely adopted authentication technology in the Big Data space. Kerberos is an authentication protocol for trusted hosts on untrusted networks. It provides secure authentication between clients/nodes/services. Starting with Ambari 2.0, Kerberos can be fully deployed using Ambari.

Informatica Big Data Edition integrates completely with Kerberos. A key aspect of Kerberos integration is the Key Distribution Center (KDC). Informatica supports both Active Directory and MIT-based KDCs.
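To make the idea of authenticated clients, nodes, and services more concrete: every Kerberos identity is a principal, conventionally written as `primary/instance@REALM`. The following is a minimal sketch that splits such a string into its parts. The `Principal` type, the `parse_principal` helper, and the host and realm names are illustrative assumptions, not part of any Informatica or Hortonworks API.

```python
from typing import NamedTuple, Optional


class Principal(NamedTuple):
    """Parts of a Kerberos principal written as primary/instance@REALM."""
    primary: str
    instance: Optional[str]
    realm: str


def parse_principal(text: str) -> Principal:
    """Split a principal string into primary, optional instance, and realm.

    Hypothetical helper for illustration only; it does not contact a KDC
    and does not handle escaped separators.
    """
    name, sep, realm = text.rpartition("@")
    if not sep or not name or not realm:
        raise ValueError(f"expected name@REALM, got {text!r}")
    primary, _, instance = name.partition("/")
    if not primary:
        raise ValueError(f"missing primary component in {text!r}")
    return Principal(primary, instance or None, realm)


# A service principal is usually tied to a host; a user principal is not.
service = parse_principal("hive/node1.example.com@EXAMPLE.COM")
user = parse_principal("alice@EXAMPLE.COM")
```

Service principals usually carry the host name as the instance, which is why each node in a Kerberized cluster typically needs its own principals and keytabs.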

Knox

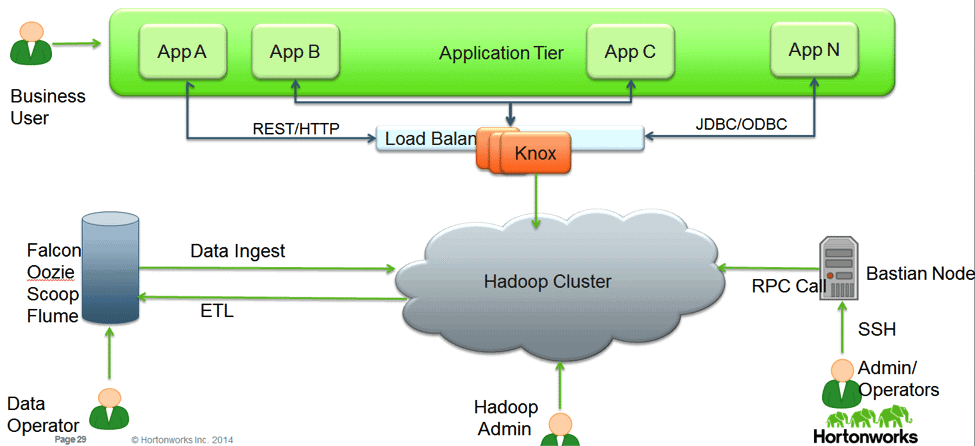

Knox is designed for applications that use REST APIs, or JDBC/ODBC over HTTP, to access or update data. It is not currently recommended for performance-intensive applications such as Informatica. Knox is also not designed for RPC-based access (Hadoop clients); in that case, Kerberos is recommended for authenticating system and end users.

Here is a representative architecture where Knox is deployed over a Hadoop cluster. Knox, in this example, provides perimeter security for users accessing data through applications leveraging REST or HTTP based services.
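As a rough illustration of the REST access path, a client behind the perimeter addresses the Knox gateway rather than the cluster nodes, and the gateway maps the request to the internal service. The sketch below only builds a WebHDFS URL in the gateway form `https://{host}:{port}/{gateway-path}/{topology}/webhdfs/v1/{path}`. The helper name `knox_webhdfs_url`, the host name, and the default values (port `8443`, path `gateway`, topology `default`) are assumptions to check against your own Knox configuration.

```python
from urllib.parse import quote, urlencode


def knox_webhdfs_url(gateway_host: str, hdfs_path: str, op: str,
                     gateway_port: int = 8443,
                     gateway_path: str = "gateway",
                     topology: str = "default",
                     **params: str) -> str:
    """Build a WebHDFS URL that is routed through a Knox gateway.

    Hypothetical helper: the defaults are assumptions and may differ
    in a given deployment.
    """
    if not hdfs_path.startswith("/"):
        raise ValueError("hdfs_path must be absolute, e.g. /tmp/data")
    query = urlencode({"op": op, **params})
    return (f"https://{gateway_host}:{gateway_port}/{gateway_path}/"
            f"{topology}/webhdfs/v1{quote(hdfs_path)}?{query}")


# Listing a directory through the gateway instead of the NameNode.
url = knox_webhdfs_url("knox.example.com", "/tmp/data", "LISTSTATUS")
```

The client never sees NameNode or DataNode addresses, which is the point of perimeter security: only the gateway endpoint has to be exposed.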

Informatica Big Data Edition provides rich functionality such as mass data ingestion and data preparation on Hadoop. Knox may not be suitable for some of these functions. Kerberos is the suggested authentication mechanism when Informatica ETL tools are used for data preparation on Hadoop.

Authorization

Apache Ranger

Apache Ranger offers a centralized security framework to manage fine-grained access control over Hadoop data access components like Apache Hive and Apache HBase. Within Hive, there are recommended best practices for setting up policies in HiveServer2 and the Hive CLI. You can find more details in this blog: https://hwxjojo.staging.wpengine.com/blog/best-practices-for-hive-authorization-using-apache-ranger-in-hdp-2-2/
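To show what "fine-grained" means in practice, here is a toy model of a policy check at the database, table, and column level. It is a conceptual sketch only: the `Policy` class, the `is_allowed` function, the wildcard matching, and the deny-by-default rule are assumptions made for illustration, and none of this is Ranger's actual API or evaluation engine.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase
from typing import FrozenSet, Iterable


@dataclass(frozen=True)
class Policy:
    """Toy access policy: who may do what on which Hive resource."""
    database: str            # resource patterns; "*" matches anything
    table: str
    column: str
    users: FrozenSet[str]
    accesses: FrozenSet[str]  # e.g. {"select", "update"}


def is_allowed(policies: Iterable[Policy], user: str, access: str,
               database: str, table: str, column: str) -> bool:
    """Return True if any policy grants the access; deny otherwise."""
    for p in policies:
        if (user in p.users
                and access in p.accesses
                and fnmatchcase(database, p.database)
                and fnmatchcase(table, p.table)
                and fnmatchcase(column, p.column)):
            return True
    return False


# One policy: etl_user may read every column of sales.orders.
policies = [
    Policy("sales", "orders", "*",
           frozenset({"etl_user"}), frozenset({"select"})),
]
can_read = is_allowed(policies, "etl_user", "select",
                      "sales", "orders", "amount")
can_write = is_allowed(policies, "etl_user", "update",
                       "sales", "orders", "amount")
```

Keeping such rules in one central place, instead of scattering grants across each component, is the main benefit the centralized model is meant to provide.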

For HiveServer2, SQL standard-based authorization (and thus Ranger) does not allow the TRANSFORM clause. Informatica BDE plans to support HiveServer2 in the near future and, in the meantime, recommends that customers use storage-based authorization to protect the metastore when using Hive with Informatica. More details on storage-based authorization can be found here.
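As a hedged sketch of what enabling storage-based authorization involves, the snippet below renders the three metastore-side properties described in the Apache Hive documentation as a `hive-site.xml` fragment. The `render_hive_site` helper is hypothetical, and on HDP these settings are normally managed through Ambari, so treat the output as a reference to verify against your Hive version rather than a file to deploy as-is.

```python
from xml.etree import ElementTree as ET

# Metastore-side settings for storage-based authorization, as named in
# the Apache Hive documentation. Verify them against your Hive release.
STORAGE_BASED_AUTH = {
    "hive.metastore.pre.event.listeners":
        "org.apache.hadoop.hive.ql.security.authorization."
        "AuthorizationPreEventListener",
    "hive.security.metastore.authorization.manager":
        "org.apache.hadoop.hive.ql.security.authorization."
        "StorageBasedAuthorizationProvider",
    "hive.security.metastore.authenticator.manager":
        "org.apache.hadoop.hive.ql.security."
        "HadoopDefaultMetastoreAuthenticator",
}


def render_hive_site(props: dict) -> str:
    """Render properties in the Hadoop <configuration> XML layout."""
    root = ET.Element("configuration")
    for name, value in props.items():
        prop = ET.SubElement(root, "property")
        ET.SubElement(prop, "name").text = name
        ET.SubElement(prop, "value").text = value
    return ET.tostring(root, encoding="unicode")


fragment = render_hive_site(STORAGE_BASED_AUTH)
```

With this model, metadata operations are authorized against the file system permissions of the underlying warehouse directories, so HDFS permissions and ACLs become the single source of truth for who can change a table.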

Summary

In summary, Informatica Big Data Edition builds on the security frameworks in HDP, with Kerberos for authentication and storage-based authorization for Hive, so that organizations can secure their big data projects as they implement them.

About the Author

Sumeet Kumar Agrawal is a Principal Product Manager for the Big Data Edition product at Informatica. Based in the Bay Area, Sumeet has over 8 years of experience working on different Informatica technologies. Sumeet is responsible for defining Informatica’s big data product strategy and roadmap, and for working with customers to define their big data platform. Sumeet’s expertise includes the Hadoop ecosystem and security, as well as development-oriented technologies such as Java and web services. Sumeet is also responsible for evaluating Hadoop partner integration technologies for Informatica.
